Runway’s latest announcement is drawing attention across the tech and film industries. The AI video startup has released its newest video synthesis model, Gen-4, to paid users. Runway claims this iteration overcomes one of the hardest problems in AI video generation: keeping characters and objects consistent across different shots.

A Leap Towards Consistent Digital Storytelling

Gen-4’s headline feature is its ability to keep characters and scenes consistent throughout a video. Earlier AI-generated films often felt like loosely connected dream sequences: the thematic elements were there, but realistic continuity was missing. Runway says Gen-4 lets filmmakers produce coherent narratives whose visual elements stay consistent from shot to shot.

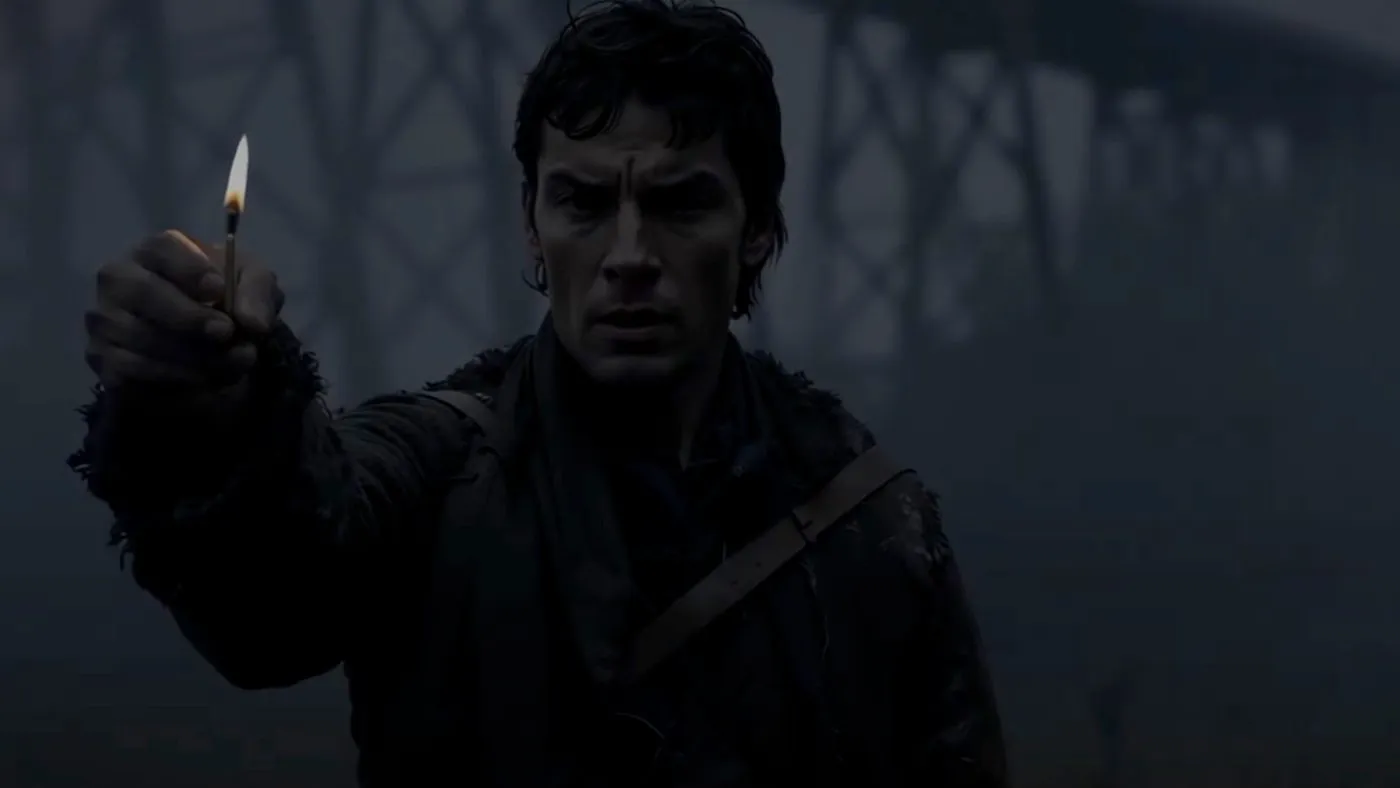

The key requirement is a reference image: “Runway claims Gen-4 can maintain consistent characters and objects, provided it’s given a single reference image of the character or object in question as part of the project in Runway’s interface.” Runway demonstrated this with example videos in which the same woman and the same statue appear across various shots and scenes, keeping their appearance despite changes in context and lighting.

Enhancing Filmmaker Flexibility and Creative Freedom

Gen-4 expands the creative options available to filmmakers. Unlike its predecessors, it is designed to cover an environment or subject from multiple perspectives within the same sequence. “Gen-2 and Gen-3 were good at maintaining stylistic integrity but fell short in generating multiple angles within the same scene,” and Gen-4 is intended to address that limitation.

Multi-angle coverage adds depth to storytelling and gives filmmakers more flexibility to build dynamic, engaging narratives. If Gen-4 can capture various angles without losing consistency in how characters and environments are depicted, it would mark a significant shift in AI-assisted video production.

Runway’s Evolutionary Journey in AI Video Technology

The road to Gen-4 began in February 2023, when Runway released the first publicly available version of its video synthesis product. The early releases, starting with Gen-1, were more experimental and served as a learning experience for both the developers and the creative community. Each subsequent model brought significant improvements; Gen-3, released last year, allowed longer videos of up to 10 seconds with better consistency and coherence.

Gen-4 marks an important milestone for Runway and strengthens its position in the competitive AI video market. As the industry continues to explore how AI can support creative work, Runway’s steady refinement of its models sets a high bar for AI-powered video production.

Looking ahead, AI’s role in filmmaking and content creation seems likely to grow. Tools like Gen-4 give creators new ways to explore digital storytelling, making this an exciting time for technology enthusiasts and creative professionals alike.